Webinars

The Digital Development Unit of the Information Society Department organises a series of webinars on the impact of digital technologies on human rights, democracy and the rule of law, in support of initiatives by Council of Europe sectors. These webinars are an opportunity for experts from different backgrounds to meet and discuss issues related to the digital transformation of society.

The Digital Development Unit of the Information Society Department is also recording a series of webinars (Digital Development Online Events) highlighting Council of Europe initiatives addressing challenges resulting from the digitalisation of people’s lives. These webinars are an opportunity for interested audiences from different fields of expertise to meet and discuss opportunities and risks emerging from the digital transformation of societies, and in particular the impact on human rights, democracy and the rule of law.

The AI&Law series, co-organised by the Council of Europe and the University of Strasbourg (UMR DRES), consists of regular meetings open to the wider public, public decision-makers, officials from international organisations and national administrations, and academics. Its aim is to assess what is at stake in subjects at the frontier between digital techniques and the practice of law.

Behavioural addictions facilitated by information and communication technologies – risks and perspectives

Over 5 billion people use the internet worldwide. Undoubtedly, this technology brings many advantages. At the same time, concerns are growing about the potential harmful effects of excessive smartphone use. It is important to understand that the likely culprit for overuse is not the smartphone itself but the excessive use of internet applications.

As the current business model of many applications is based on users providing personal data in exchange for the right to use an app, it is not surprising that social media apps and free games contain many design elements aimed at prolonging the time users stay connected. Nearly all widely used apps and social media platforms contain such elements.

Growing research evidence shows that this can provoke compulsive app and social media use, leading to behavioural addictions. It is therefore time to reflect critically on the effects of this "data in exchange for app use" business model, and of the technological and psychological mechanisms it applies, on individuals and societies. The challenge will be not to limit smartphone use, but to regulate certain design elements in apps so as to arrive at less addictive products. Above all, new forms of currency for paying for app services, able to replace payment through attention, need to be explored.

Speakers

- Yves Citton, professor in Literature and Media at the Université Paris 8 Vincennes-Saint Denis (France)

- Antti Järventaus, development manager at Save the Children (Finland) | PPT presentation

Summary to be published

Recording

AI and Sandboxes

AI is at the centre of the fourth industrial revolution. This new disruptive technology is extremely pervasive and represents a challenge for society as a whole. Its huge impact on human rights, democracy and the rule of law requires urgent, coordinated global action. While in Italy public debate and awareness still need to be developed at the societal level, Japan presents a strategy based on the principle of human-centric AI. This strategy rests on three pillars: dignity, diversity and inclusion, and sustainability, which together aim to create Society 5.0. In a democratic society, the hope is for AI to be beneficial.

This webinar has been organised with the support of Japan.

Speakers:

- Deborah Bergamini (Italy), Rapporteur of the Parliamentary Assembly of the Council of Europe for the report “Need for democratic governance of artificial intelligence”

- Hiroaki Kitano, Ph.D. (Japan), President & CEO of Sony Computer Science Laboratories, Inc., CEO of Sony AI, Inc. | PPT Presentation

- Armando Guío Español (Colombia), Consultant of the Development Bank of Latin America and author of the Colombian Ethical framework on AI

Service Robotics and Human Rights

The digitalisation of our lives brings challenges and opportunities. One aspect of this digitalisation is the use of artificial intelligence systems, in particular intelligent service robots, which sometimes augment or replace us in interactions with other humans.

In certain contexts, the use of such robots may raise questions that are relevant from a human rights perspective. When can their use foster or hamper human dignity and the right to privacy? How can their use affect other fundamental rights?

The Council of Europe invited renowned experts to a webinar to discuss the interplay between human rights and the use of service robots.

The event took place on 5 July 2021 on the sidelines of the 5th plenary meeting of the Council’s Ad-hoc Committee on Artificial Intelligence (CAHAI), which is currently examining the feasibility and potential elements of a legal framework for the development, design and application of artificial intelligence systems.

Speakers:

- Dr. Susanne Bieller (Germany), General Secretary of the International Federation of Robotics

- Hiroaki KITANO, Ph.D. (Japan), President and CEO of Sony Computer Science Laboratories, Inc., and CEO of Sony AI, Inc.

- Stephen Wu, J.D. (United States), Attorney and Shareholder of the Silicon Valley Law Group, Chair of the American Bar Association Artificial Intelligence and Robotics National Institute

- Aimee van Wynsberghe, Ph.D. (Germany), Alexander von Humboldt Professor for Applied Ethics of AI, University of Bonn, President of the Foundation for Responsible Robotics

Human rights and legal aspects of blockchain technology

On 9 March 2022, from 2 pm to 3.30 pm CET, the Council of Europe’s Digital Development Unit held a webinar on the human rights and legal aspects of blockchain technology.

The event was organised around a study on the topic, commissioned by the Council of Europe and carried out by prominent law and technology researchers:

- Florence G'sell, University of Lorraine,

- Florian Martin-Bariteau, University of Ottawa.

The authors of the study presented the key takeaways from their draft research paper, including key policy considerations relevant for the Council of Europe.

The webinar included a discussion of the study's key arguments, involving:

- Souichirou Kozuka, Gakushuin University,

- Michèle Finck, University of Tübingen

On Wednesday 11 January 2023, the Secretariat of the Committee on Artificial Intelligence (CAI) held a webinar organised with the kind and generous support of the Government of Japan, on the margins of the 3rd Plenary meeting of the CAI.

The event brought together experts from across the world to discuss the challenges of developing international (EU Commission, OECD, UNESCO) and national (Canada, Japan, UK, the USA) risk and impact management frameworks as well as the challenges of making them mutually interoperable.

Moderator:

Mr Sebastian Hallensleben (CEN CENELEC)

Panellists:

- Mr Omar Bitar (the Treasury Board of Canada)

- Ms Doaa Abu Elyounes (UNESCO)

- Professor Arisa Ema (University of Tokyo, Japan)

- Mr Kilian Gross (the European Commission, DG Connect)

- Professor David Leslie (Alan Turing Institute, UK)

- Ms Elham Tabassi (NIST, USA)

- Ms Karine Perset (OECD)

On Wednesday 1 February 2023, the Secretariat of the Committee on Artificial Intelligence (CAI) held a webinar organised with the kind and generous support of the Government of Japan, as a side event of the 4th Plenary meeting of the CAI.

The event brought together national and international experts from across the world to discuss the current challenges and opportunities for AI in the sphere of sustainable development and environment.

Host:

Ms Louise Riondel (Co-Secretary to the Committee on Artificial Intelligence)

Moderator:

Mr Patrick Penninckx (Head of Information Society Department, DG I, Council of Europe)

Keynote speaker:

Mr David Eray (Minister of the Environment of the Canton of Jura in Switzerland and Spokesperson on Digitalisation and Artificial Intelligence of the Congress of Local and Regional Authorities of the Council of Europe)

Panelists:

- Mr Peter Clutton-Brock (Executive Director and co-founder of the Centre for AI and Climate, UK)

- Ms Golestan Radwan (Chief Digital Officer, UNEP)

- Mr Andrew W. Wyckoff (Directorate for Science, Technology and Innovation (STI), OECD)

- Mr Yoshiki Yamagata (Keio University, Japan)

On Wednesday 19 April 2023, the Secretariat of the Committee on Artificial Intelligence (CAI) held a webinar on AI and gender as a side event of the 5th Plenary meeting of the CAI.

The event brought together national and international experts from across the world to discuss the alarming negative impact of AI systems on gender equality, the reasons behind it and how to mitigate such risks, as well as the opportunities that properly designed and regulated systems offer for advancing gender equality.

Host:

Ms Louise Riondel (Co-Secretary to the Committee on Artificial Intelligence, Digital Development Unit, Council of Europe)

Moderator:

Ms Caterina Bolognese (Head of the Gender Equality Division, Directorate of Human Dignity, Equality and Governance, Council of Europe)

Keynote speaker:

Ms Jana Novohradska (Ministry of Investments, Regional Development and Informatization of the Slovak Republic and Gender Equality Rapporteur of the CAI)

Panelists:

- Ms Ivana Bartoletti (Global Chief Privacy Officer at Wipro, Visiting Cybersecurity and Privacy Executive Fellow at Pamplin Business School at Virginia Tech, Co-Founder of the Women Leading in AI Network)

- Ms Hadas Orgad (Ph.D. candidate at the Technion, Israel Institute of Technology)

- Mr David Reichel (EU Agency for Fundamental Rights)

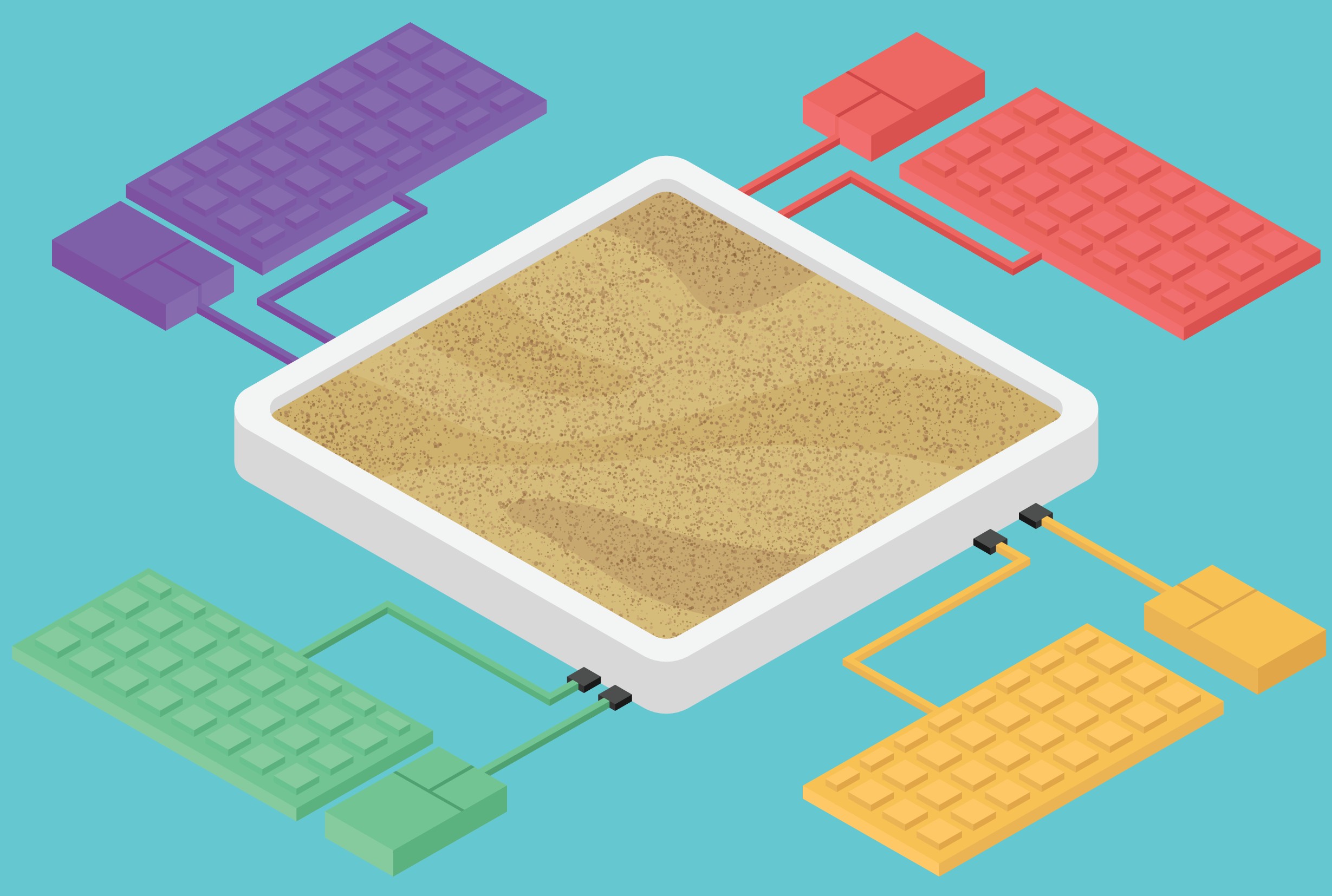

AI Sandboxes: striking a balance between regulation and innovation

“Regulatory sandboxes” are closed testing environments in which participating companies can experiment with innovative business models or products under a reduced regulatory burden or with expedited regulatory decisions.

“Regulatory sandboxes” come in many shapes, and the term itself carries various connotations. Sandboxes may be adopted to support innovation and the development of new products, services or business models. They may help foster a services system that is more efficient and manages risks more effectively. By using sandboxes, regulators may also gain a better understanding of how emerging technologies and business models interact with the regulatory framework.

The webinar will draw on the experience of AI sandboxes already operating in Norway, Spain and Switzerland, as well as input from industry, with a view to exploring the advantages they offer, considering the risks and costs associated with those advantages, and proposing best practices that policymakers could use to mitigate them.

On Wednesday 31 May 2023, from 1 to 2pm (CET), the Secretariat of the Committee on Artificial Intelligence (CAI) is holding a webinar (hybrid/Zoom + AGORA G02) as a side event of the Sixth Plenary meeting of the CAI. It is thematically linked to the examination of the draft Council of Europe [Framework] Convention on AI, Human rights, Democracy and the Rule of law, which the Committee will discuss during the meeting.

The event brings together national and international experts from across the world to discuss the current challenges and opportunities in the field of AI.

Host

Ms Louise RIONDEL - Co-Secretary to the Committee on Artificial Intelligence

Moderator

Mr Patrick Penninckx - Head of Information Society Department, DG I, Council of Europe

Panelists

Mr Alberto GAGO FERNANDEZ - Cabinet of the Secretary of State of Digital and AI, Spain

Ms Laura GALINDO-ROMERO - Open Loop Project, Meta

Mr Eirik GULBRANDSEN - Sandbox for Responsible Artificial Intelligence, Norwegian Data Protection Authority

Mr Raphael VON THIESSEN - AI Sandbox, Canton of Zurich, Switzerland

Three experts discussed predictive policing and the rule of technology. Marine Kettani, policy officer at the Ministry of Justice (France), Christopher Markou, Leverhulme Fellow and Lecturer (United Kingdom), and Antoinette Rouvroy, Senior Researcher at the University of Namur (Belgium), analysed the current state of affairs in Europe and shared their views on the consequences of the increasing use of algorithms in public policies. The webinar was opened and closed by Rik Daems, President of the Parliamentary Assembly of the Council of Europe (PACE).

Three experts were invited to discuss the certification of algorithmic systems. Arisa Ema, PhD, Project Assistant Professor at the University of Tokyo (Japan), Nicolas Economou, Chief Executive of H5 and Chair of the Law Committee of the IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems (United States), and Yaniv Benamou, PhD, Of Counsel Attorney and Lecturer at the University of Geneva (intellectual property, digital privacy and technology law) (Switzerland), shared their views on this form of regulation.

The webinar was opened by Lord Tim Clement-Jones, former Chair of the House of Lords Select Committee on Artificial Intelligence (2017-2018) (United Kingdom), and closed by Thibault de Ravel d’Esclapon, PhD, deputy director of the research federation « L’Europe en mutation » (University of Strasbourg).

The 8th edition, broadcast on 10 December 2020 (14h30-16h30), addressed the profound impact of AI and decision-making algorithms on human rights. Most international organisations present human rights as the basis of their work on AI regulation, given the impact of this technology at both the individual and the collective level.

Discrimination and infringements of decision-making autonomy, privacy and freedom of expression are among the risks commonly cited and now taken into account in the work of most regulators. But other societal impacts must also be considered: the progressive enmeshing of our lives in algorithms is not insignificant, and a new model of society seems to be emerging.

The objective of this webinar was to explore this deeper impact of the digital transformation we are living through and to examine how regulation could prevent harm to individuals and to society as a whole. Excerpts from the documentary iHuman illustrated some of the challenges encountered.

Speakers

- Tonje Hessen Schei, Film Director, producer and Screenwriter (Norway)

- Pr. Karine Gentelet, Associate professor at Université du Québec en Outaouais and Abeona-ENS-Obvia Chair on Artificial Intelligence and Social Justice (Québec)

- Nathalie Smuha, Researcher – Department of International & European Law, KU Leuven (Belgium)

Summary to be published

More information

Recording

This webinar focusses on the role artificial intelligence techniques such as facial recognition play in the criminal justice system. Deep learning techniques of this type promise to make it considerably easier to identify people from pictures and can be a real boon to police departments, provided the identification is technically reliable and fair – and the person in question was already a suspect before they were identified.

Video surveillance coupled with facial recognition, currently a hot topic, is gaining traction in Europe, while several North American cities have stopped using facial recognition and some have even prohibited it. Does video surveillance create suspects? Does the fact that someone is present in a specific place, predetermined by the police, constitute reasonable grounds on which to launch a criminal investigation? Is using such combined surveillance and recognition systems a fair and effective way to fight crime?

Clear regulations have been adopted in recent decades to govern the use of investigative measures based on specific techniques such as telecommunications interception, using GPS to track vehicles, and installing surveillance cameras in homes. In Europe, the use of such techniques is reviewed by the European Court of Human Rights. Should similar rules apply when facial recognition is used to identify people?

Turning the mere identification of someone into grounds for an investigation or proof of a crime is a legally delicate endeavour. Identification by a witness is governed by rules of criminal procedure. Witnesses must testify and be questioned by defence counsel before a judge, and in some cases their testimony cannot be admitted as evidence. Does AI testify? Does it provide an expert opinion? Can defence counsel question it, or impugn its reliability? Do otherwise benign observations produced by AI, such as that a driverless car was on the road, play a role in criminal proceedings?

Speakers

- Thomas Lampert, Ph.D., Chair of Artificial Intelligence and Data Science, University of Strasbourg

- Kate Robertson, Criminal and regulatory litigator, Markson Law, Toronto

- Sabine Gless, Ph.D., Professor of Criminal Law and Procedure, University of Basel

Summary to be published

More information

Recording

This webinar is dedicated to the spread of deepfakes (or cheapfakes) and the automatic generation of text content, for example with GPT-3.

Surprising and playful, but also opening up the possibility of industrialised disinformation, these technologies have largely been treated separately in the mainstream media. Their combination is studied more rarely, and the ease with which such content can be created raises the risk of it flooding the public space, including social networks.

Are these fears overestimated? What technical solutions exist to filter such content? What are the legal responses? This webinar will present practical, concrete examples of these technologies and enable a panel of experts to discuss the important issues at stake, such as data protection, the fight against cybercrime and the fight against disinformation.

Speakers

- Sebastian Hallensleben, Ph.D., VDE e. V., Head of Digitalisation and Artificial Intelligence, Germany

- Karen Melchior, Member of the European Parliament, Denmark

- Pavel Gladyshev, Ph.D., Associate Professor at University College Dublin, Ireland

Summary to be published

More information

Recording

List of previous AI&Law webinars

- Meetings #01 to #04 were held as conferences and were not recorded

- #05 - Myths and realities of tracing applications | 16 April 2020

Summary

More information

Recording